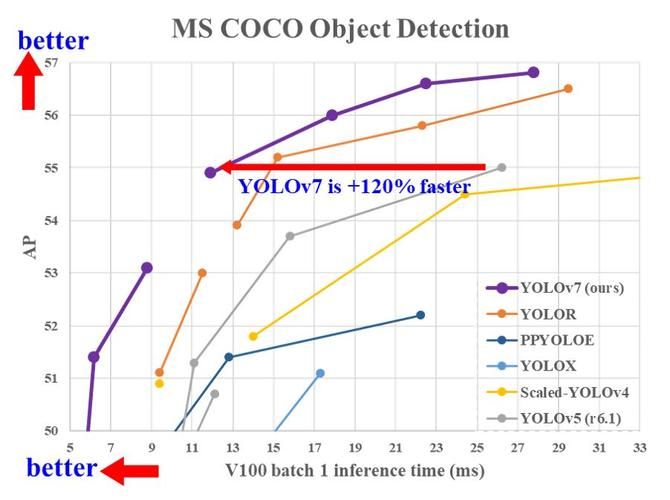

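Today's deep learning methods focus on designing the most suitable objective function so that the model's predictions come as close as possible to the ground truth. At the same time, a suitable architecture must be designed to supply enough information for prediction. Existing methods overlook the fact that a large amount of information is lost as input data passes through layer-by-layer feature extraction and spatial transformation. This paper examines this information loss as data travels through a deep network, namely the problems of the information bottleneck and reversible functions. We propose the concept of Programmable Gradient Information (PGI) to cope with the various changes that deep networks require in order to achieve multiple objectives. PGI can provide complete input information for the target task when computing the objective function, so that reliable gradient information is available for updating the network weights. In addition, a new lightweight network architecture, the Generalized Efficient Layer Aggregation Network (GELAN), is designed based on gradient path planning. GELAN's architecture confirms that PGI achieves excellent results on lightweight models. We validate the proposed GELAN and PGI on object detection with the MS COCO dataset. The results show that GELAN, using only conventional convolution operators, achieves better parameter utilization than state-of-the-art methods built on depth-wise convolution. PGI applies to a wide range of models, from lightweight to large. It can be used to obtain complete information, so that models trained from scratch achieve better results than state-of-the-art models pre-trained on large datasets; the comparison is shown in Figure 1.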
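The information bottleneck referred to in the abstract is commonly written as a chain of mutual-information inequalities. The following is only a sketch, with notation assumed here rather than taken from the text above: $X$ is the input, $f_\theta$ and $g_\phi$ are two consecutive transformations in the network with parameters $\theta$ and $\phi$, and $I$ denotes mutual information.

```latex
I(X, X) \;\ge\; I\bigl(X, f_\theta(X)\bigr) \;\ge\; I\bigl(X, g_\phi(f_\theta(X))\bigr)
```

Read left to right, each additional transformation can at best preserve the information retained about $X$, so the deeper the feature, the less of the original input is available when the objective function is computed.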

Paper: https://arxiv.org/pdf/2402.13616.pdf

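Contributions

- Novel methodology: the paper proposes the concept of Programmable Gradient Information (PGI) and the design of the Generalized Efficient Layer Aggregation Network (GELAN). Together, these two techniques address information loss in deep learning models, particularly in complex tasks such as object detection.
- High-performance object detection model: by combining PGI and GELAN, the authors developed the YOLOv9 object detection model. While remaining lightweight and efficient, the model significantly improves object detection accuracy and surpasses current state-of-the-art methods such as YOLOv5, YOLOv6, YOLOv7, and YOLOv8.
- Comprehensive experimental validation: extensive experiments on the standard MS COCO dataset verify the effectiveness of the proposed methods. The results show a significant improvement in YOLOv9's detection performance and include an in-depth analysis of parameter efficiency and computational efficiency.
- Open-source contribution: the authors released the YOLOv9 source code, giving the research community a high-performance object detection model that can be used directly and studied further. This promotes the sharing and exchange of techniques and helps advance object detection.
- Combination of theory and practice: the paper discusses the design principles and advantages of PGI and GELAN in theory and also demonstrates, with experimental data, how they perform in practice. This combination provides strong evidence and inspiration for solving practical problems in deep learning.

In summary, by proposing new techniques and delivering a high-performance model, the paper makes important theoretical and practical contributions to object detection, especially in improving the performance and efficiency of deep learning models on complex vision tasks.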

Experimental results

Architecture diagram

Source code

#!/usr/bin/env python
# -*- coding: utf-8 -*-
'''
@File : Yolov9.py
@Time : 2024/03/04 14:33:12
@Author : CSDN迪菲赫尔曼
@Version : 1.0
@Reference : https://blog.csdn.***/weixin_43694096
@Desc : None
'''
__all__ = ("RepNCSPELAN4", "ADown", "SPPELAN", "CBFuse", "CBLinear", "Silence")

import torch
from torch import nn as nn
import torch.nn.functional as F

from ultralytics.nn.modules.conv import autopad, Conv, RepConv

class RepBottleneck(nn.Module):
    """Rep bottleneck."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):
        """Initializes a RepBottleneck module with customizable in/out channels, shortcut option, groups and expansion
        ratio.
        """
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = RepConv(c1, c_, k[0], 1)
        self.cv2 = Conv(c_, c2, k[1], 1, g=g)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        """Forward pass through RepBottleneck layer."""
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

class RepCSP(nn.Module):
    """Rep CSP Bottleneck with 3 convolutions."""

    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
        """Initializes RepCSP layer with given channels, repetitions, shortcut, groups and expansion ratio."""
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.cv3 = Conv(2 * c_, c2, 1)  # optional act=FReLU(c2)
        self.m = nn.Sequential(
            *(RepBottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n))
        )

    def forward(self, x):
        """Forward pass through RepCSP layer."""
        return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1))

class RepNCSPELAN4(nn.Module):
    """CSP-ELAN."""

    def __init__(self, c1, c2, c3, c4, n=1):
        """Initializes CSP-ELAN layer with specified channel sizes, repetitions, and convolutions."""
        super().__init__()
        self.c = c3 // 2
        self.cv1 = Conv(c1, c3, 1, 1)
        self.cv2 = nn.Sequential(RepCSP(c3 // 2, c4, n), Conv(c4, c4, 3, 1))
        self.cv3 = nn.Sequential(RepCSP(c4, c4, n), Conv(c4, c4, 3, 1))
        self.cv4 = Conv(c3 + (2 * c4), c2, 1, 1)

    def forward(self, x):
        """Forward pass through RepNCSPELAN4 layer."""
        y = list(self.cv1(x).chunk(2, 1))
        y.extend((m(y[-1])) for m in [self.cv2, self.cv3])
        return self.cv4(torch.cat(y, 1))

    def forward_split(self, x):
        """Forward pass using split() instead of chunk()."""
        y = list(self.cv1(x).split((self.c, self.c), 1))
        y.extend(m(y[-1]) for m in [self.cv2, self.cv3])
        return self.cv4(torch.cat(y, 1))

class ADown(nn.Module):
    """ADown."""

    def __init__(self, c1, c2):
        """Initializes ADown module with convolution layers to downsample input from channels c1 to c2."""
        super().__init__()
        self.c = c2 // 2
        self.cv1 = Conv(c1 // 2, self.c, 3, 2, 1)
        self.cv2 = Conv(c1 // 2, self.c, 1, 1, 0)

    def forward(self, x):
        """Forward pass through ADown layer."""
        # positional args: kernel_size=2, stride=1, padding=0, ceil_mode=False, count_include_pad=True
        x = torch.nn.functional.avg_pool2d(x, 2, 1, 0, False, True)
        x1, x2 = x.chunk(2, 1)  # split the channels into two halves
        x1 = self.cv1(x1)  # branch 1: 3x3 stride-2 conv
        # positional args: kernel_size=3, stride=2, padding=1
        x2 = torch.nn.functional.max_pool2d(x2, 3, 2, 1)
        x2 = self.cv2(x2)  # branch 2: max pool followed by 1x1 conv
        return torch.cat((x1, x2), 1)

class SPPELAN(nn.Module):
    """SPP-ELAN."""

    def __init__(self, c1, c2, c3, k=5):
        """Initializes SPP-ELAN block with convolution and max pooling layers for spatial pyramid pooling."""
        super().__init__()
        self.c = c3
        self.cv1 = Conv(c1, c3, 1, 1)
        self.cv2 = nn.MaxPool2d(kernel_size=k, stride=1, padding=k // 2)
        self.cv3 = nn.MaxPool2d(kernel_size=k, stride=1, padding=k // 2)
        self.cv4 = nn.MaxPool2d(kernel_size=k, stride=1, padding=k // 2)
        self.cv5 = Conv(4 * c3, c2, 1, 1)

    def forward(self, x):
        """Forward pass through SPPELAN layer."""
        y = [self.cv1(x)]
        y.extend(m(y[-1]) for m in [self.cv2, self.cv3, self.cv4])
        return self.cv5(torch.cat(y, 1))

class Silence(nn.Module):
    """Silence."""

    def __init__(self):
        """Initializes the Silence module."""
        super(Silence, self).__init__()

    def forward(self, x):
        """Forward pass through Silence layer."""
        return x

class CBLinear(nn.Module):
    """CBLinear."""

    def __init__(self, c1, c2s, k=1, s=1, p=None, g=1):
        """Initializes the CBLinear module with one convolution whose output is split into the widths listed in c2s."""
        super(CBLinear, self).__init__()
        self.c2s = c2s
        self.conv = nn.Conv2d(c1, sum(c2s), k, s, autopad(k, p), groups=g, bias=True)

    def forward(self, x):
        """Forward pass through CBLinear layer; returns one tensor per entry of c2s."""
        outs = self.conv(x).split(self.c2s, dim=1)
        return outs

class CBFuse(nn.Module):
    """CBFuse."""

    def __init__(self, idx):
        """Initializes CBFuse module with layer index for selective feature fusion."""
        super(CBFuse, self).__init__()
        self.idx = idx

    def forward(self, xs):
        """Forward pass through CBFuse layer."""
        target_size = xs[-1].shape[2:]  # spatial size of the last input
        # pick branch idx[i] from each CBLinear output and resize it to target_size
        res = [
            F.interpolate(x[self.idx[i]], size=target_size, mode="nearest")
            for i, x in enumerate(xs[:-1])
        ]
        # element-wise sum of the resized branches and the last input
        out = torch.sum(torch.stack(res + xs[-1:]), dim=0)
        return out
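As a quick sanity check on the modules above, the output shape of ADown and the concatenation width inside RepNCSPELAN4 can be worked out by hand. The sketch below does that arithmetic in plain Python, without PyTorch. The helper names `conv_out`, `adown_shape`, and `elan_concat_channels` are hypothetical and not part of the source file, and the 160x160 input assumes a 640x640 image at the P2 stage.

```python
def conv_out(size, k, s, p):
    """Length of one spatial axis after a conv/pool layer (floor rounding)."""
    return (size + 2 * p - k) // s + 1


def adown_shape(c2, h, w):
    """(channels, height, width) produced by ADown(c1, c2) on an h x w input."""
    # avg_pool2d(kernel=2, stride=1, padding=0) trims one pixel per axis.
    h, w = conv_out(h, 2, 1, 0), conv_out(w, 2, 1, 0)
    # Branch 1 is a 3x3 stride-2 conv; branch 2 is a 3x3 stride-2 max pool
    # followed by a 1x1 conv. Both use padding 1, so their sizes agree.
    h, w = conv_out(h, 3, 2, 1), conv_out(w, 3, 2, 1)
    # Each branch emits c2 // 2 channels and the two are concatenated.
    return 2 * (c2 // 2), h, w


def elan_concat_channels(c3, c4):
    """Channels fed into cv4 of RepNCSPELAN4(c1, c2, c3, c4)."""
    # The cv1 output (c3 channels) is chunked in two and both halves are kept;
    # cv2 and cv3 then contribute c4 channels each.
    return c3 + 2 * c4


print(adown_shape(256, 160, 160))     # ADown [256] applied to a 160x160 map
print(elan_concat_channels(128, 64))  # RepNCSPELAN4 [256, 128, 64, 1]
```

The initial average pool shrinks each axis by one pixel, so odd and even input sizes both come out at roughly half resolution after the stride-2 stage.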

How to apply the changes

- Create Yolov9.py in the ultralytics-main/ultralytics/nn/modules folder and copy the source code above into it;
- Add the import statement to ultralytics-main/ultralytics/nn/tasks.py;

from ultralytics.nn.modules.Yolov9 import RepNCSPELAN4, ADown, SPPELAN, CBFuse, CBLinear, Silence

- Add the module names, and the two elif branches for CBLinear and CBFuse, to ultralytics-main/ultralytics/nn/tasks.py;

RepNCSPELAN4,
ADown,
SPPELAN,

elif m is CBLinear:
    c2 = args[0]
    c1 = ch[f]
    args = [c1, c2, *args[1:]]
elif m is CBFuse:
    c2 = ch[f[-1]]

- Edit the yaml file and start training.
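To see what the two `elif` branches in the steps above compute, they can be lifted into a standalone function and run on toy values. This is only an illustrative sketch: `resolve_c2` is a hypothetical name, modules are passed as strings rather than classes, and the `ch` list here is made up, where `ch[i]` stands for the output channels of layer `i`.

```python
def resolve_c2(module, f, args, ch):
    """Mirror the two added branches: return (c2, args) for one YAML row."""
    if module == "CBLinear":
        c2 = args[0]                # list of branch widths, e.g. [64, 128]
        c1 = ch[f]                  # input channels come from source layer f
        args = [c1, c2, *args[1:]]  # constructor call becomes CBLinear(c1, c2s)
    elif module == "CBFuse":
        c2 = ch[f[-1]]              # output width follows the last input
    else:
        raise ValueError(f"unhandled module: {module}")
    return c2, args


# Toy network: layer 0 outputs 64 channels, layer 1 outputs 128.
ch = [64, 128]

# Layer 2: CBLinear reading layer 0, producing a single 64-channel branch.
c2, args = resolve_c2("CBLinear", 0, [[64]], ch)
print(c2, args)
ch.append(c2)   # a CBLinear layer records a list of widths

# Layer 3: an ordinary 64-channel layer.
ch.append(64)

# Layer 4: CBFuse over the CBLinear output and the previous layer.
c2, args = resolve_c2("CBFuse", [2, -1], [[0]], ch)
print(c2, args)
```

CBLinear is the one case where the recorded width is a list, which is why CBFuse takes its own width from the last input rather than from a CBLinear source.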

Model yaml files

YOLOv9c.yaml

# YOLOv9c
# parameters
nc: 80 # number of classes

# gelan backbone
backbone:
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 1, RepNCSPELAN4, [256, 128, 64, 1]] # 2
  - [-1, 1, ADown, [256]] # 3-P3/8
  - [-1, 1, RepNCSPELAN4, [512, 256, 128, 1]] # 4
  - [-1, 1, ADown, [512]] # 5-P4/16
  - [-1, 1, RepNCSPELAN4, [512, 512, 256, 1]] # 6
  - [-1, 1, ADown, [512]] # 7-P5/32
  - [-1, 1, RepNCSPELAN4, [512, 512, 256, 1]] # 8
  - [-1, 1, SPPELAN, [512, 256]] # 9

head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 1, RepNCSPELAN4, [512, 512, 256, 1]] # 12

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 1, RepNCSPELAN4, [256, 256, 128, 1]] # 15 (P3/8-small)

  - [-1, 1, ADown, [256]]
  - [[-1, 12], 1, Concat, [1]] # cat head P4
  - [-1, 1, RepNCSPELAN4, [512, 512, 256, 1]] # 18 (P4/16-medium)

  - [-1, 1, ADown, [512]]
  - [[-1, 9], 1, Concat, [1]] # cat head P5
  - [-1, 1, RepNCSPELAN4, [512, 512, 256, 1]] # 21 (P5/32-large)

  - [[15, 18, 21], 1, Detect, [nc]] # Detect(P3, P4, P5)
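One way to check the `from` indices in the YOLOv9c listing above is to trace the channel count layer by layer. The sketch below is a simplified stand-in for what a model parser does, not the Ultralytics implementation: it assumes no width scaling, treats the first argument of every non-routing module as its output width, and uses a hypothetical `trace_channels` helper.

```python
# (from, module, args) rows transcribed from the YOLOv9c listing; the repeat
# count is dropped because it is 1 on every row.
ROWS = [
    (-1, "Conv", [64, 3, 2]),
    (-1, "Conv", [128, 3, 2]),
    (-1, "RepNCSPELAN4", [256, 128, 64, 1]),
    (-1, "ADown", [256]),
    (-1, "RepNCSPELAN4", [512, 256, 128, 1]),
    (-1, "ADown", [512]),
    (-1, "RepNCSPELAN4", [512, 512, 256, 1]),
    (-1, "ADown", [512]),
    (-1, "RepNCSPELAN4", [512, 512, 256, 1]),
    (-1, "SPPELAN", [512, 256]),
    (-1, "nn.Upsample", [None, 2, "nearest"]),
    ([-1, 6], "Concat", [1]),
    (-1, "RepNCSPELAN4", [512, 512, 256, 1]),
    (-1, "nn.Upsample", [None, 2, "nearest"]),
    ([-1, 4], "Concat", [1]),
    (-1, "RepNCSPELAN4", [256, 256, 128, 1]),
    (-1, "ADown", [256]),
    ([-1, 12], "Concat", [1]),
    (-1, "RepNCSPELAN4", [512, 512, 256, 1]),
    (-1, "ADown", [512]),
    ([-1, 9], "Concat", [1]),
    (-1, "RepNCSPELAN4", [512, 512, 256, 1]),
    ([15, 18, 21], "Detect", ["nc"]),
]


def trace_channels(rows):
    """Return the output channel count of every layer, in order."""
    out = []
    for src, module, args in rows:
        if module == "Concat":
            c = sum(out[i] for i in src)   # widths add along the channel axis
        elif module == "nn.Upsample":
            c = out[src]                   # resizing keeps the width
        elif module == "Detect":
            c = [out[i] for i in src]      # record the three input widths
        else:
            c = args[0]                    # first argument is the output width
        out.append(c)
    return out


channels = trace_channels(ROWS)
for i, (row, c) in enumerate(zip(ROWS, channels)):
    print(i, row[1], c)
```

Under these assumptions the detection head receives the outputs of layers 15, 18, and 21, which matches the P3, P4, and P5 comments in the listing.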

YOLOv9e.yaml

# YOLOv9e
# parameters
nc: 80 # number of classes

# gelan backbone
backbone:
  - [-1, 1, Silence, []]
  - [-1, 1, Conv, [64, 3, 2]] # 1-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 2-P2/4
  - [-1, 1, RepNCSPELAN4, [256, 128, 64, 2]] # 3
  - [-1, 1, ADown, [256]] # 4-P3/8
  - [-1, 1, RepNCSPELAN4, [512, 256, 128, 2]] # 5
  - [-1, 1, ADown, [512]] # 6-P4/16
  - [-1, 1, RepNCSPELAN4, [1024, 512, 256, 2]] # 7
  - [-1, 1, ADown, [1024]] # 8-P5/32
  - [-1, 1, RepNCSPELAN4, [1024, 512, 256, 2]] # 9

  - [1, 1, CBLinear, [[64]]] # 10
  - [3, 1, CBLinear, [[64, 128]]] # 11
  - [5, 1, CBLinear, [[64, 128, 256]]] # 12
  - [7, 1, CBLinear, [[64, 128, 256, 512]]] # 13
  - [9, 1, CBLinear, [[64, 128, 256, 512, 1024]]] # 14

  - [0, 1, Conv, [64, 3, 2]] # 15-P1/2
  - [[10, 11, 12, 13, 14, -1], 1, CBFuse, [[0, 0, 0, 0, 0]]] # 16
  - [-1, 1, Conv, [128, 3, 2]] # 17-P2/4
  - [[11, 12, 13, 14, -1], 1, CBFuse, [[1, 1, 1, 1]]] # 18
  - [-1, 1, RepNCSPELAN4, [256, 128, 64, 2]] # 19
  - [-1, 1, ADown, [256]] # 20-P3/8
  - [[12, 13, 14, -1], 1, CBFuse, [[2, 2, 2]]] # 21
  - [-1, 1, RepNCSPELAN4, [512, 256, 128, 2]] # 22
  - [-1, 1, ADown, [512]] # 23-P4/16
  - [[13, 14, -1], 1, CBFuse, [[3, 3]]] # 24
  - [-1, 1, RepNCSPELAN4, [1024, 512, 256, 2]] # 25
  - [-1, 1, ADown, [1024]] # 26-P5/32
  - [[14, -1], 1, CBFuse, [[4]]] # 27
  - [-1, 1, RepNCSPELAN4, [1024, 512, 256, 2]] # 28
  - [-1, 1, SPPELAN, [512, 256]] # 29

# gelan head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 25], 1, Concat, [1]] # cat backbone P4
  - [-1, 1, RepNCSPELAN4, [512, 512, 256, 2]] # 32

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 22], 1, Concat, [1]] # cat backbone P3
  - [-1, 1, RepNCSPELAN4, [256, 256, 128, 2]] # 35 (P3/8-small)

  - [-1, 1, ADown, [256]]
  - [[-1, 32], 1, Concat, [1]] # cat head P4
  - [-1, 1, RepNCSPELAN4, [512, 512, 256, 2]] # 38 (P4/16-medium)

  - [-1, 1, ADown, [512]]
  - [[-1, 29], 1, Concat, [1]] # cat head P5
  - [-1, 1, RepNCSPELAN4, [512, 1024, 512, 2]] # 41 (P5/32-large)

  - [[35, 38, 41], 1, Detect, [nc]] # Detect(P3, P4, P5)
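The CBLinear and CBFuse rows in the YOLOv9e listing are easier to follow once the index routing is written out. The sketch below tabulates, for each CBFuse row, which branch width it picks from each CBLinear source. The tables are transcribed from the listing, the trailing `-1` source (the preceding layer) is left out, and `selected_widths` is a hypothetical helper that mirrors the `x[self.idx[i]]` lookup in `CBFuse.forward`.

```python
# Branch widths of each CBLinear layer, keyed by layer index (rows 10-14).
CBLINEAR = {
    10: [64],
    11: [64, 128],
    12: [64, 128, 256],
    13: [64, 128, 256, 512],
    14: [64, 128, 256, 512, 1024],
}

# CBFuse rows: layer index -> (CBLinear sources, idx argument).
CBFUSE = {
    16: ([10, 11, 12, 13, 14], [0, 0, 0, 0, 0]),
    18: ([11, 12, 13, 14], [1, 1, 1, 1]),
    21: ([12, 13, 14], [2, 2, 2]),
    24: ([13, 14], [3, 3]),
    27: ([14], [4]),
}


def selected_widths(sources, idx):
    """Width of the branch CBFuse picks from each CBLinear source."""
    return [CBLINEAR[layer][i] for layer, i in zip(sources, idx)]


for layer, (sources, idx) in CBFUSE.items():
    print(layer, selected_widths(sources, idx))
```

Every CBFuse row selects branches of a single width, which is what allows the resized branches to be stacked and summed with the last input.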

Training architecture diagram

Inference architecture diagram

SPPELAN
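SPPELAN chains three identical stride-1 max-pooling layers and concatenates every intermediate result. A minimal 1-D sketch in plain Python, assuming the default `k=5`, shows the effect of that chaining: applying the 5-wide pool two and three times matches single pools of width 9 and 13. The helper `max_pool_1d` is hypothetical and only mirrors the padding behaviour of `nn.MaxPool2d` along one axis.

```python
def max_pool_1d(xs, k):
    """Stride-1 max pool with padding k // 2 (output length == input length)."""
    r = k // 2
    # Padding never wins a max, so clipping the window at the borders
    # gives the same result as padding the input.
    return [max(xs[max(0, i - r): i + r + 1]) for i in range(len(xs))]


signal = [3, 0, 1, 7, 2, 2, 5, 0, 0, 9, 4, 1, 6, 8, 0, 2]

once = max_pool_1d(signal, 5)     # what cv2 sees
twice = max_pool_1d(once, 5)      # what cv3 sees
thrice = max_pool_1d(twice, 5)    # what cv4 sees

print(twice == max_pool_1d(signal, 9))
print(thrice == max_pool_1d(signal, 13))
```

The concatenated output therefore covers several receptive-field sizes while only ever running a small pooling kernel.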

RepNCSPELAN4

ADown

The architecture diagrams above are courtesy of: https://github.***/divided7